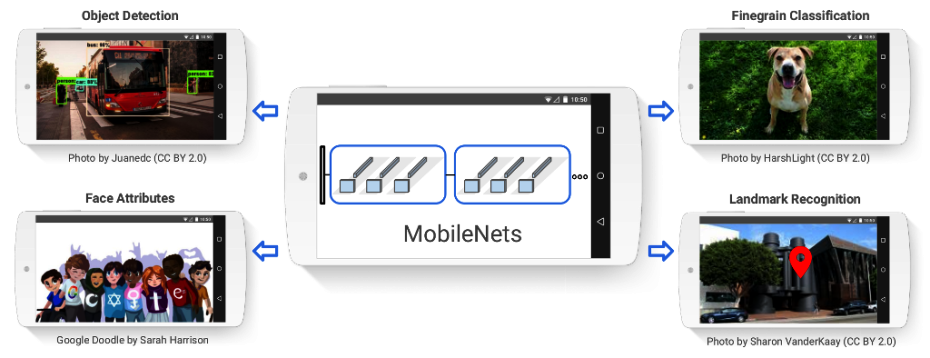

Internet giant Google has announced the release of MobileNets, a series of TensorFlow visual recognition models built for low-power, low-speed platforms such as smartphones and tablets. MobileNets allow smartphones and other smart devices to understand their surroundings better. They should also help a device make better guesses about what it is looking at, based on its location.

Right now, a lot of the machine learning inside mobile apps works by passing data off to cloud-based supercomputers or servers for processing, then presenting the resulting insights to the user once they return over the network. This approach has some disadvantages. The device needs a working data connection all the time, the round trip between client and server takes time, and sending data over the network exposes it to the risk of theft. With MobileNets, an app can process data on the device itself, which is faster and more secure. Google's MobileNets should make tools like Google Lens more functional and accurate. They are also useful for Google Photos, which might be able to pre-process the images we take to determine their content.

This new software from Google is also open source. You can read more about MobileNets here. If you are a developer, you can choose from pre-trained models that vary in size and accuracy to find the one that best suits your application, and then deploy it on Android, iOS and Raspberry Pi using TensorFlow Mobile. With MobileNets, Google is building the future of machine learning on a smartphone.
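
To make the size-versus-accuracy choice concrete, here is a minimal Python sketch of how a developer might pick a model that fits a device's storage budget. It is only an illustration: the `ModelVariant` class, the `pick_variant` helper, the variant names and every number in `CATALOG` are hypothetical placeholders, not published MobileNets figures or part of any TensorFlow API.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class ModelVariant:
    """One pre-trained model option (all fields are illustrative)."""
    name: str        # hypothetical label, not an official model name
    size_mb: float   # placeholder on-disk size in megabytes
    accuracy: float  # placeholder accuracy between 0 and 1


def pick_variant(variants: Sequence[ModelVariant],
                 max_size_mb: float) -> Optional[ModelVariant]:
    """Return the most accurate variant that fits the size budget.

    Returns None when no variant is small enough.
    """
    fitting = [v for v in variants if v.size_mb <= max_size_mb]
    if not fitting:
        return None
    return max(fitting, key=lambda v: v.accuracy)


# Placeholder catalog: the values are made up for this example only.
CATALOG = [
    ModelVariant("small", 2.0, 0.50),
    ModelVariant("medium", 8.0, 0.60),
    ModelVariant("large", 16.0, 0.70),
]

if __name__ == "__main__":
    choice = pick_variant(CATALOG, max_size_mb=10.0)
    print(choice.name if choice else "no model fits the budget")
```

In a real app the catalog would be filled in from the sizes and accuracies published alongside the released models, and the budget could also account for latency or memory rather than size alone.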